A peek into the new world of service discovery

How to proxy a service running in Kubernetes through Traefik as a load balancer and proxy.

Proxying Kubernetes services

In our last Traefik blogpost we showed how easy it is to proxy Docker containers running on a host. This time we will show how easy it is to proxy containers from a Kubernetes cluster through Traefik.

Traefik is a reverse proxy made for the new world of service discovery and is especially useful when running services in Kubernetes.

In this blogpost we will look at how we can proxy a service running in Kubernetes through Traefik as a load balancer and proxy.

We will deploy a service in Kubernetes and access it through Traefik via a DNS name. We will create an ingress rule inside Kubernetes to define our routing. This rule is then picked up by Traefik and it will configure itself automatically.

Prerequisites

You will need a running Kubernetes cluster and a machine on which the load balancer will run. The machine that runs Traefik needs routes to the pod networks, so that it can reach the pods via their cluster-internal IPs. Setting this up is out of scope for this article, but here is a hint:

K8S_NODES=$(kubectl get nodes | grep "Ready" | cut -d" " -f1 | tr '\n' '\t')

for node in $K8S_NODES; do
    NODE_IP=$(kubectl describe node $node \
        | egrep -w "Addresses" \
        | tr '\n' '\t' \
        | cut -d"," -f1 \
        | rev \
        | cut -d$'\t' -f1 \
        | rev)

    kubectl describe node ${node} \
        | egrep -w "Name:|PodCIDR" \
        | tr '\n' '\t' \
        | awk -v IP=$NODE_IP '{ print "sudo route add -net " , $4 , " gw " , IP }'
done

If you add more worker-nodes, you need to add new routes for these as well.

The above example presumes that you are using CNI networking. It will not work on overlay networks. In that case, the machine running the load balancer will need to be on the same overlay network.
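To make it clearer what the hint is meant to print, here is a minimal sketch that builds the same kind of route command from plain "node IP, PodCIDR" pairs. The function name routes_from_pairs and the sample addresses are made up for illustration; they are not taken from a real cluster.

```shell
#!/bin/sh
# Print one "route add" command per worker node.
# Input on stdin: lines of the form "<node-ip> <pod-cidr>".
routes_from_pairs() {
    while read -r node_ip pod_cidr; do
        # Skip blank or incomplete lines.
        if [ -z "$node_ip" ] || [ -z "$pod_cidr" ]; then
            continue
        fi
        printf 'sudo route add -net %s gw %s\n' "$pod_cidr" "$node_ip"
    done
}

# Hypothetical sample data standing in for real cluster output.
printf '%s\n' \
    '10.240.0.20 10.200.0.0/24' \
    '10.240.0.21 10.200.1.0/24' | routes_from_pairs
```

On a live cluster the pairs could probably come from something like kubectl get nodes -o jsonpath='{range .items[*]}{.status.addresses[?(@.type=="InternalIP")].address}{" "}{.spec.podCIDR}{"\n"}{end}', assuming every node reports an InternalIP and has a PodCIDR assigned.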

Deploy a deployment, service and ingress in Kubernetes

Deploy an NGINX Deployment to Kubernetes

First, we create a deployment for NGINX so that we can update it later on, scale it and keep it running.

Create a file called nginx-deployment.yaml with the following content

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  replicas: 3
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80

The above YAML will give us a deployment, a replica set and 3 pods running nginx 1.7.9 when we run the following

> kubectl create -f nginx-deployment.yaml

deployment "nginx-deployment" created

Let’s check the deployment

> kubectl get deployments

NAME               DESIRED   CURRENT   UP-TO-DATE   AVAILABLE   AGE
nginx-deployment   3         3         3            0           4s

After a while, the “AVAILABLE” value should change from 0 to 3, and you are ready to continue.

Now let’s verify that the pods are up and running.

> kubectl get pods

NAME                                READY   STATUS    RESTARTS   AGE
nginx-deployment-4087004473-clz6x   1/1     Running   0          3m
nginx-deployment-4087004473-jwz0k   1/1     Running   0          3m
nginx-deployment-4087004473-zfkzn   1/1     Running   0          3m

Deploy an NGINX service to Kubernetes

Now we need to create a service: an object in Kubernetes that holds a fixed IP and a cluster DNS name for our deployment.

Create a file called nginx-service.yaml with the following content

apiVersion: v1
kind: Service
metadata:
  labels:
    name: nginxservice
  name: nginxservice
spec:
  ports:
    # The port that this service should serve on.
    - port: 80
  # Label keys and values that must match in order to receive traffic for this service.
  selector:
    app: nginx
  type: ClusterIP

This will create a service object in Kubernetes that holds a ClusterIP and the endpoint IPs of our pods. If a pod is killed, a new one will be started and the service endpoints updated.

Create the service by

> kubectl create -f nginx-service.yaml

service "nginxservice" created

Check the service by running

> kubectl get services

NAME           CLUSTER-IP    EXTERNAL-IP   PORT(S)   AGE
kubernetes     10.32.0.1     <none>        443/TCP   3d
nginxservice   10.32.0.102   <none>        80/TCP    1m

We see here that the ClusterIP of our new service is 10.32.0.102.
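It can also be worth checking that the service has actually picked up all three pods as endpoints. Below is a small sketch that counts the IP addresses it is given. The helper name count_endpoints and the sample pod IPs are hypothetical.

```shell
#!/bin/sh
# Count the whitespace-separated IP addresses read from stdin.
count_endpoints() {
    wc -w | tr -d ' '
}

# Hypothetical pod IPs standing in for real cluster output.
echo '10.200.0.4 10.200.1.3 10.200.2.5' | count_endpoints
```

Against a real cluster, the input would typically come from kubectl get endpoints nginxservice -o jsonpath='{.subsets[*].addresses[*].ip}', and the count should match the number of ready replicas.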

Deploy an ingress to Kubernetes

Up until now we have been using Kubernetes like we would have before the ingress object was introduced.

Now we define our routing rule from the outside world to our NGINX service by creating a file called nginx-ingress.yaml with the following content

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: nginxingress
spec:
  rules:
  - host: nginx.example.com
    http:
      paths:
      - path: /
        backend:
          serviceName: nginxservice
          servicePort: 80

Create the ingress by running

> kubectl create -f nginx-ingress.yaml

ingress "nginxingress" created

Installing Traefik

Now our cluster is ready. We only need to install Traefik and set it up, and to add an entry to our hosts file.

Download binary

Go to GitHub and download the latest stable release of Traefik for your architecture, and make it executable. As of now, it’s 1.1.2.

https://github.com/containous/traefik/releases/latest

wget https://github.com/containous/traefik/releases/download/v1.1.2/traefik_linux-amd64
mv traefik_linux-amd64 traefik
chmod u+x traefik

Create a config file

Create a file in the same folder as traefik called traefik.toml with the following content

[web]
address = ":8080"
ReadOnly = true

[kubernetes]
# Kubernetes server endpoint
endpoint = "http://controller.example.com:8080"
namespaces = ["default","kube-system"]

The above configuration will tell Traefik to only serve content from the two namespaces “default” and “kube-system”. If you want Traefik to serve all namespaces, simply remove the namespaces line.

The [web] section tells Traefik to serve a dashboard on port 8080. This is handy and will present all the services Traefik is currently serving.

The [kubernetes] section describes how Traefik should connect to your Kubernetes kube-apiserver. In this example the kube-apiserver is listening on port 8080 at controller.example.com. If you use SSL (and listen on port 8443), you can provide the certificate and token in these two files:

/var/run/secrets/kubernetes.io/serviceaccount/ca.crt

/var/run/secrets/kubernetes.io/serviceaccount/token

Even if you don’t use a token or certificate, you need these two files. In that case, just create the two files without any content.
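Creating the two empty files could look roughly like the sketch below. The function name make_placeholder_secrets and its root-prefix argument are our own additions, there so you can try it in a scratch directory before touching the real path.

```shell
#!/bin/sh
# Create empty ca.crt and token files below a root prefix.
# $1 = root prefix; pass "" to target the real path (needs root privileges).
make_placeholder_secrets() {
    dir="$1/var/run/secrets/kubernetes.io/serviceaccount"
    # touch leaves existing files untouched, so real credentials are not wiped.
    mkdir -p "$dir" && touch "$dir/ca.crt" "$dir/token"
}

# Try it in a scratch directory first.
scratch=$(mktemp -d)
make_placeholder_secrets "$scratch"
ls "$scratch/var/run/secrets/kubernetes.io/serviceaccount"
```

Calling make_placeholder_secrets "" as root would create the files in the location Traefik expects.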

Start Traefik

Now start Traefik either manually

./traefik -c traefik.toml

or, if you want Traefik to run as a service, you can use this as a template

[Unit]
Description=Traefik proxy server
Documentation=https://github.com/containous/traefik

[Service]
ExecStart=/root/traefik/traefik \
    -c /root/traefik/traefik.toml
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target

Add the DNS to our hosts file

In our ingress object we defined that our nginx service should be reachable through the DNS name nginx.example.com. As I don’t have a DNS server, I simply create an entry in my hosts file

Replace [load-balancer-ip] with the ip address of the machine running Traefik.

su -c 'echo "[load-balancer-ip] nginx.example.com" >> /etc/hosts'

Testing our service

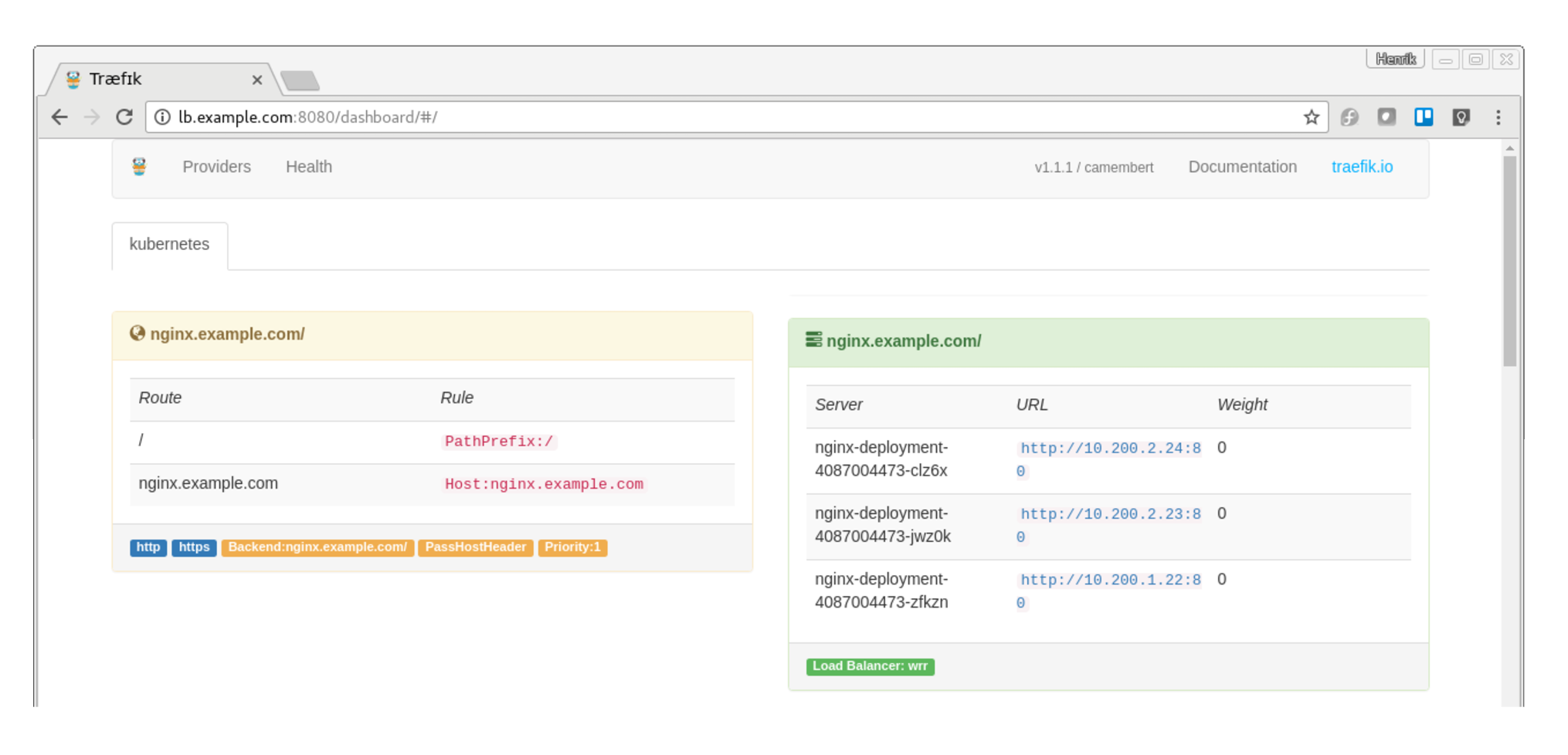

Now first go to the web dashboard of Traefik at http://[load-balancer-ip]:8080 and ensure that the nginx service is listed. You should see the dashboard with the nginx front-end and backend. The front-end describes the rule, and the backend our pods.

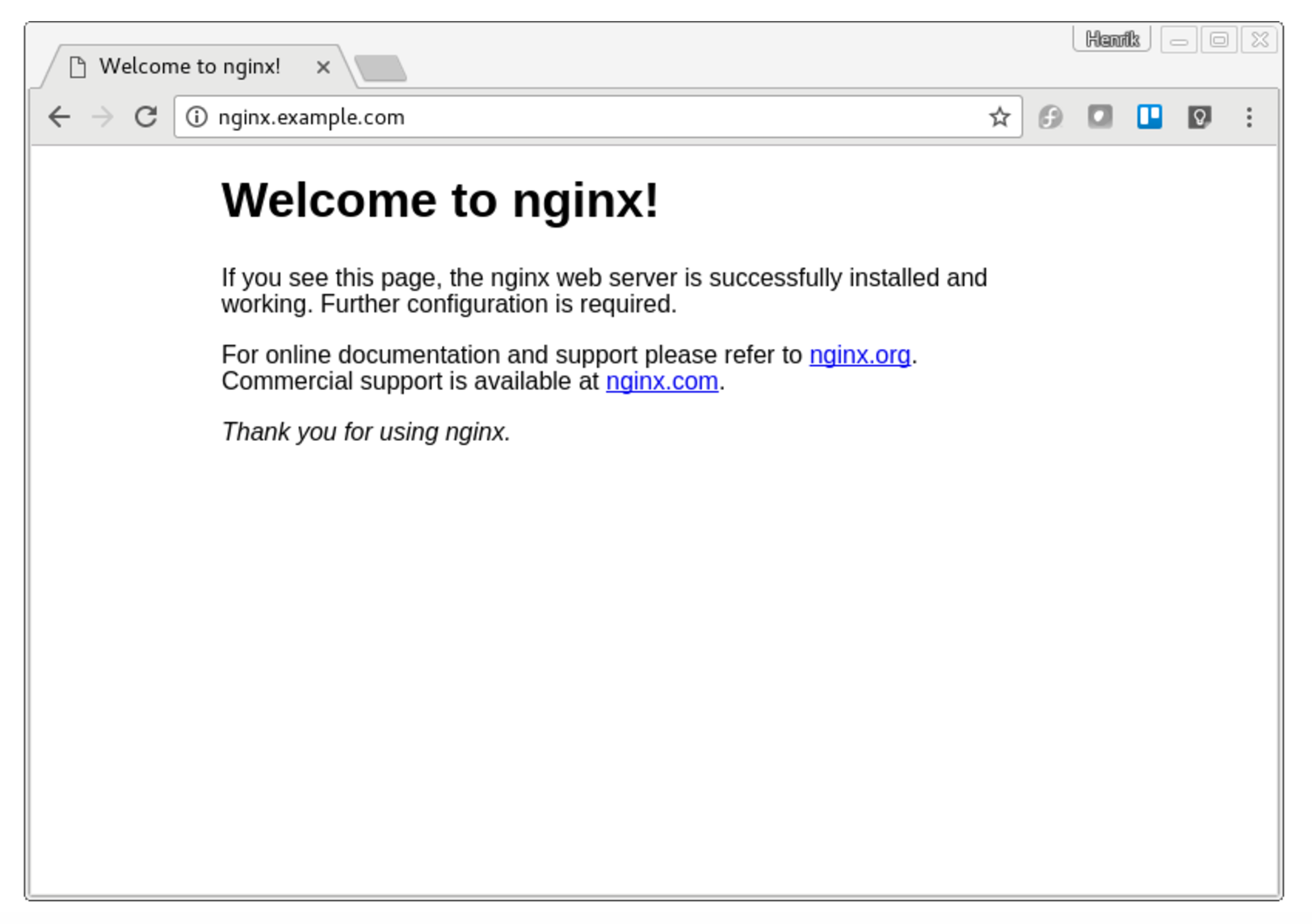

Now open a browser tab and go to http://nginx.example.com and you should see the nginx front page, served via http.

Your requests are being load balanced between the three nginx pods running in our Kubernetes cluster. Try and kill one of them, and see that you can still press F5 as much as you like. Traefik will notice, and reconfigure. When a new pod is up, replacing the killed one, it too will be served by Traefik automatically. Service discovery in its glory.
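If you prefer a terminal over pressing F5, one option is to fire a batch of requests and tally the HTTP status codes while a pod is being killed. The sketch below only does the tallying. The helper name tally_codes and the sample codes are hypothetical.

```shell
#!/bin/sh
# Summarise HTTP status codes read from stdin, one code per line.
tally_codes() {
    sort | uniq -c | awk '{ print $2 ": " $1 }'
}

# Hypothetical sample: three successful responses and one failure.
printf '%s\n' 200 200 502 200 | tally_codes
```

A loop such as for i in $(seq 1 20); do curl -s -o /dev/null -w '%{http_code}\n' http://nginx.example.com; done | tally_codes would feed it real data; ideally only 200 shows up, even while a pod is being replaced.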

Adding SSL and http -> https redirect

The above example is fine, but not useful in production, as it only serves http requests. We want to use SSL over https. So let’s see how simple that is.

Edit our traefik.toml to include http redirect to https and our certificates like this

defaultEntryPoints = ["http", "https"]

[entryPoints]
  [entryPoints.http]
  address = ":80"
    [entryPoints.http.redirect]
    entryPoint = "https"
  [entryPoints.https]
  address = ":443"
    [entryPoints.https.tls]
      [[entryPoints.https.tls.certificates]]
      CertFile = "/certs/kubernetes.pem"
      KeyFile = "/certs/kubernetes-key.pem"

[web]
address = ":8080"
ReadOnly = true

[kubernetes]
# Kubernetes server endpoint
endpoint = "http://controller.example.com:8080"
namespaces = ["default","kube-system"]

We have added two default entry points (http and https). In the entry-points section we set up a redirect from http (port 80) to https (port 443).

Then we have a certificate section, where we define our certificate and key-file. That’s it.

Now restart Traefik, either by hitting ctrl + c and re-running it, or by stopping the service and starting it again.

If Traefik is not running as a service

ctrl + c

./traefik -c traefik.toml

If Traefik is running as a service then

sudo service traefik stop

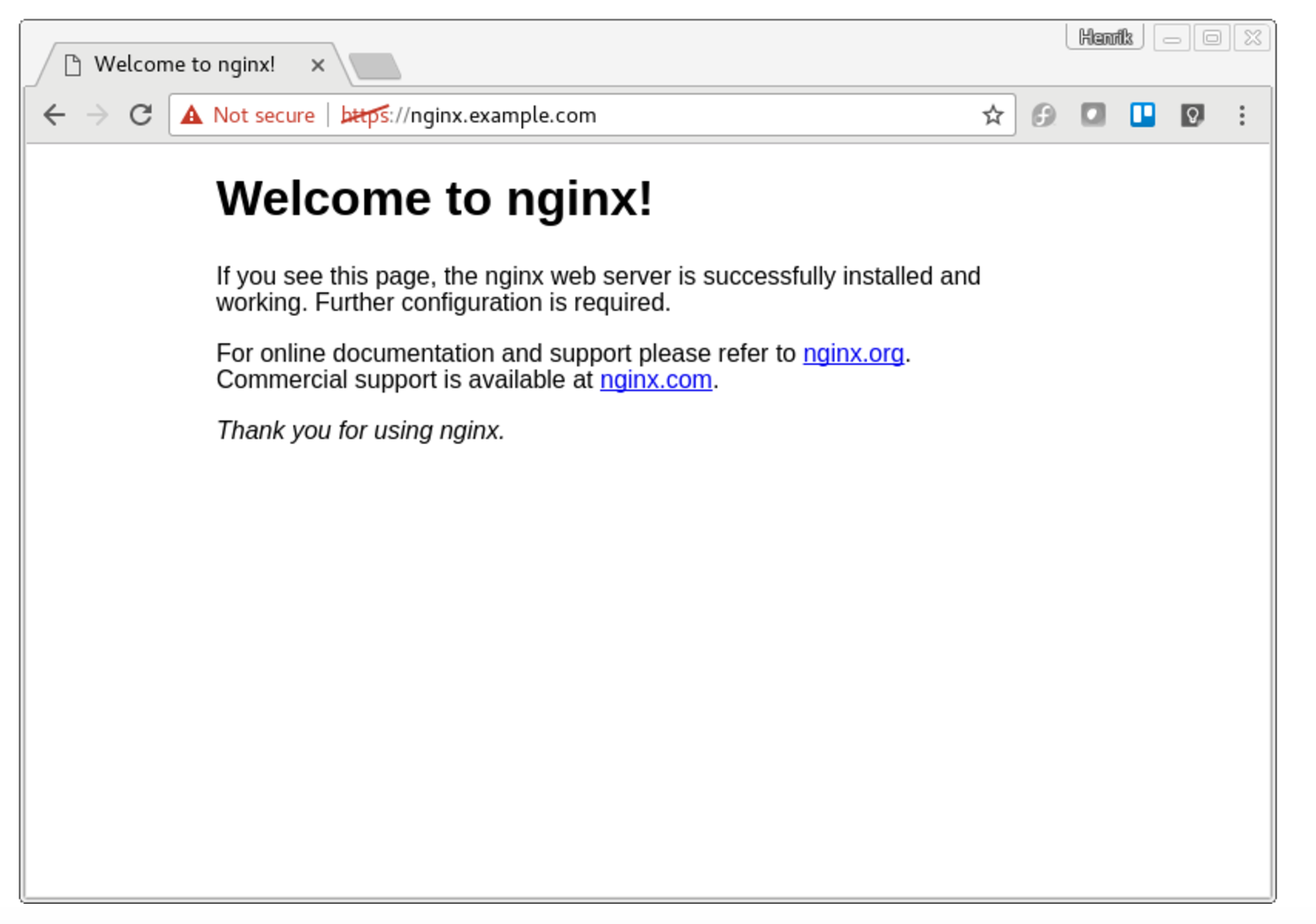

sudo service traefik startNow hit http://nginx.example.com in a browser and see that you are being redirected to https.
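The redirect can also be checked without a browser by looking at the Location header of the plain http response. Here is a minimal sketch of pulling that header out of a set of response headers. The helper name redirect_target and the sample headers are made up, and the exact status line your Traefik version sends may differ.

```shell
#!/bin/sh
# Print the value of the first Location header found on stdin.
redirect_target() {
    # HTTP headers end in CRLF, so strip the carriage returns first.
    tr -d '\r' | awk 'tolower($1) == "location:" { print $2; exit }'
}

# Hypothetical response headers standing in for a real response.
printf 'HTTP/1.1 302 Found\r\nLocation: https://nginx.example.com:443/\r\n\r\n' | redirect_target
```

With Traefik running, curl -sI http://nginx.example.com | redirect_target should print an https URL if the redirect is in place, and nothing if it is not.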

If you are using self signed certificates, your browser will warn you. Simply add an exception and continue.

And voila: nginx served from a Kubernetes cluster with https, with merely 20 lines of static configuration and service discovery.

Published: Mar 30, 2017

Updated: Mar 26, 2024