An in-depth comparison of two CI/CD servers: Concourse and Jenkins.

What do we need from a CI/CD system? How should we decide which one to use? In this blog post we ask ourselves what a modern CI/CD system should look like and compare two commonly used build systems: Jenkins pipelines and Concourse CI.

Criteria for measuring a build engine

Users often choose a build engine such as Jenkins, Travis or VSTS for reasons which are less than rational. It can be an emotional decision or a “team-member-has-worked-with-it-before”-decision, rather than an informed one. Choosing the right CI/CD system should be based on the criteria that apply to the business and the team, rather than old habits or gut feeling.

At Eficode (formerly Praqma) we help dozens of different companies each day in their journey towards Continuous Delivery. Through this experience we have compiled a list of criteria that any CI/CD system should be able to meet.

Your company might have special needs and some criteria may be more important to you than others, so you need to decide which is the best tool for you.

We have divided our requirements up into two categories: development and operations. This post will focus on the development side of the CI server.

Development focused requirements

- Is open source.
  - This way we can add custom plugins, fix bugs and understand the vision of the technology.
- Supports pipelines as code.
- Supports all major operating systems.
  - Windows, OS X and Linux (at least Debian- and Red Hat-based distros).
- Can set up a pipeline that delivers for all major programming areas.
  - Embedded, web, desktop and mobile.
- Can be accessed locally as well as server-side.
- Can run defined steps in parallel.
  - Building the same source on ten OSs should happen in parallel.
- Can pass artifacts from one build step to another.
- Can retrigger a pipeline step to troubleshoot an issue.

A closer look at Concourse and Jenkins pipelines

We focus only on the “new” Jenkins Pipeline jobs, as this is where the Jenkins project currently puts all its effort. So every time we say “Jenkins”, we mean “Jenkins Pipeline”.

History

Concourse

The development teams on the Cloud Foundry project experienced several problems with their pipelines in Jenkins. They tried to make them work, but struggled with the many platforms and services that Cloud Foundry needs to run, and got buried in Jenkins's plugin architecture. This gave birth to Concourse CI, led by Alex Suraci and his team. The vision for Concourse was a more modern, less plugin-driven build engine that could take advantage of newer technologies such as containers, and that made pipelines first-class citizens. With Cloud Foundry being open source, Concourse CI naturally is too.

Jenkins

The history of Jenkins is long and shows that the community around it is vibrant and strong. It has proven capable of going against a large corporation when their visions diverge: Jenkins itself was born in 2011 as a community fork of Hudson after a dispute with Oracle.

It has the maturity and user-base to be able to handle practically anything you need from a platform.

That said, Jenkins is largely controlled by CloudBees. At the time of writing, the creator of Jenkins, Kohsuke Kawaguchi, had been CTO of CloudBees since 2014, meaning that whatever CloudBees does, Kohsuke does, and therefore the community does as well. CloudBees is actively transforming Jenkins to meet the new standards of CI servers.

Terminology and Architecture

Concourse

Concourse is, at its core, built around containers, but there are options for running things directly in virtual machines.

The components that make up a Concourse build system are a master, a PostgreSQL database for persistence, and a number of workers.

The Concourse master is really the ATC (short for air traffic control), the heart of the system. It is both the pipeline orchestrator and the load distributor of Concourse, and you can run multiple ATCs for high availability as long as they share the same PostgreSQL database.

Workers register through the TSA (named after the Transportation Security Administration, continuing the airport pun), which is essentially an SSH server. Each worker connects to it and opens a reverse tunnel, through which the master can then direct traffic to that worker.
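To make the division of labour between the ATC and its workers more concrete, here is a toy Python model of how a step might be placed on a worker. It is our own sketch, not Concourse code: the names `ATC`, `Worker`, `Step` and the “least busy worker” choice are assumptions (Concourse's real placement strategy is configurable and more involved). What it tries to capture is that a task's `platform` must match the worker's platform and that, as we understand Concourse's tag rules, tagged workers only accept steps that request their tags.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Worker:
    name: str
    platform: str  # e.g. "linux", "windows" or "darwin"
    tags: set[str] = field(default_factory=set)
    active_containers: int = 0


@dataclass
class Step:
    platform: str
    tags: set[str] = field(default_factory=set)


def tags_match(step_tags: set[str], worker_tags: set[str]) -> bool:
    # Untagged steps only go to untagged workers;
    # tagged steps need a worker carrying all of the requested tags.
    if not step_tags:
        return not worker_tags
    return step_tags <= worker_tags


class ATC:
    """Toy scheduler (hypothetical): workers register, steps are placed on a compatible worker."""

    def __init__(self) -> None:
        self.workers: dict[str, Worker] = {}

    def register(self, worker: Worker) -> None:
        # Stands in for the worker's SSH registration through the TSA.
        self.workers[worker.name] = worker

    def place(self, step: Step) -> Worker:
        candidates = [
            w
            for w in self.workers.values()
            if w.platform == step.platform and tags_match(step.tags, w.tags)
        ]
        if not candidates:
            raise LookupError(f"no worker for platform={step.platform!r}, tags={sorted(step.tags)}")
        # Assumed strategy: least busy worker, ties broken by name.
        chosen = min(candidates, key=lambda w: (w.active_containers, w.name))
        chosen.active_containers += 1
        return chosen
```

Registering two Linux workers and a Mac worker tagged `ios`, two untagged `linux` steps land on `linux-1` and then `linux-2`, a `darwin` step tagged `ios` lands on `mac-1`, and an untagged `darwin` step raises `LookupError` because the only Mac worker is tagged.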

Since Concourse does not let you set up a pipeline from the server's web interface, you need its command-line binary, called fly, to set up a new pipeline.

A pipeline in Concourse is made of three elements: resources, resource types and jobs. In Concourse, a resource is an object that you need to pull into or push out of the pipeline. Good examples include git repositories, artifact storage and email, but also abstract things like time, other pipelines and server configuration.

Resource types are a way to define external resources not made by the Concourse team. These are pulled in the same way as a native resource and treated the same. Finally, jobs are the execution element: they build code or run automation on a resource.
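A rough mental model of how resources and jobs interact is that a resource is an ever-growing list of versions, and a job whose `get` step has `trigger: true` starts a new build whenever that resource produces a new version, using the latest version of each of its inputs. The Python sketch below is our simplification, not Concourse's implementation; `Pipeline.check`, `Get` and the resource names `repo` and `nightly` are hypothetical, and features like `passed:` constraints are left out.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Resource:
    name: str
    versions: list[str] = field(default_factory=list)


@dataclass
class Get:
    resource: str
    trigger: bool = False  # mirrors `trigger: true` on a get step


@dataclass
class Job:
    name: str
    gets: list[Get]
    builds: list[dict[str, str]] = field(default_factory=list)


class Pipeline:
    """Hypothetical model: new resource versions trigger builds of dependent jobs."""

    def __init__(self, resources: list[Resource], jobs: list[Job]) -> None:
        self.resources = {r.name: r for r in resources}
        self.jobs = jobs

    def check(self, resource_name: str, version: str) -> list[str]:
        """Record a version found by a resource check; return the names of triggered jobs."""
        resource = self.resources[resource_name]
        if version in resource.versions:
            return []  # nothing new, nothing triggers
        resource.versions.append(version)
        started = []
        for job in self.jobs:
            triggered = any(g.resource == resource_name and g.trigger for g in job.gets)
            ready = all(self.resources[g.resource].versions for g in job.gets)
            if triggered and ready:
                # A build pins the latest version of every input.
                job.builds.append({g.resource: self.resources[g.resource].versions[-1] for g in job.gets})
                started.append(job.name)
        return started
```

For example, with a `unit` job triggered by `repo` and a `report` job that reads `repo` but only triggers on `nightly`, a new `repo` version starts `unit` only, while the first `nightly` version starts `report` with the current `repo` version.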

Jenkins

The terminology of Jenkins is described as follows:

The Jenkins master is an advanced scheduler that, based on job definitions, monitors and executes builds on nodes. A job can be defined in many ways: as a standard job, through Job DSL, or through the new Jenkins Pipeline.

In terms of architecture, Jenkins is “just” a Java-based WAR file for the server and a JAR file for the nodes that build the jobs. It stores all configuration and logs as files on the master's disk. The core of Jenkins consists only of the scheduler, the communication with the nodes, and execution. The rest is left to plugins, of which there are plenty!

At the time of writing there were 1431 plugins for Jenkins, which is both a strength and a weakness. The strength is that Jenkins has become the Swiss Army knife of CI.

If you need Jenkins to do something for you, chances are that somebody has made a plugin for it! But the plethora of plugins comes at a price: not all of them are of production-ready quality, or work with the new Pipeline concept.

Because Jenkins has been around much longer than containers, it focuses on putting the build on a node rather than in a container. It does have decent container support, either directly from the shell or through a plugin, which makes containers easy to work with and to get logs from.
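The scheduling role of the master can be illustrated with a toy queue: a build waits until a node carrying the requested label has a free executor. This Python sketch is a simplification for illustration only; `Master`, `Node` and the single-label matching are our assumptions, whereas real Jenkins supports full label expressions and leaves much of the rest to plugins.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    labels: set[str]
    executors: int
    running: list[str] = field(default_factory=list)

    def has_free_executor(self) -> bool:
        return len(self.running) < self.executors


@dataclass
class Master:
    """Hypothetical scheduler: assigns queued builds to labelled nodes."""

    nodes: list[Node]
    # Pending builds in arrival order, each with the label it requires.
    queue: list[tuple[str, str]] = field(default_factory=list)

    def submit(self, build: str, label: str) -> None:
        self.queue.append((build, label))

    def schedule(self) -> dict[str, str]:
        """Place queued builds, oldest first; builds with no free matching node stay queued."""
        assigned: dict[str, str] = {}
        still_waiting: list[tuple[str, str]] = []
        for build, label in self.queue:
            node = next((n for n in self.nodes if label in n.labels and n.has_free_executor()), None)
            if node is None:
                still_waiting.append((build, label))
            else:
                node.running.append(build)
                assigned[build] = node.name
        self.queue = still_waiting
        return assigned

    def finish(self, build: str) -> None:
        # Free the executor the build was using.
        for node in self.nodes:
            if build in node.running:
                node.running.remove(build)
                return
```

With one single-executor Linux node and a two-executor Windows node, submitting two Linux builds and one Windows build schedules the first Linux build and the Windows build right away; the second Linux build stays queued until the first one finishes.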

Hello world

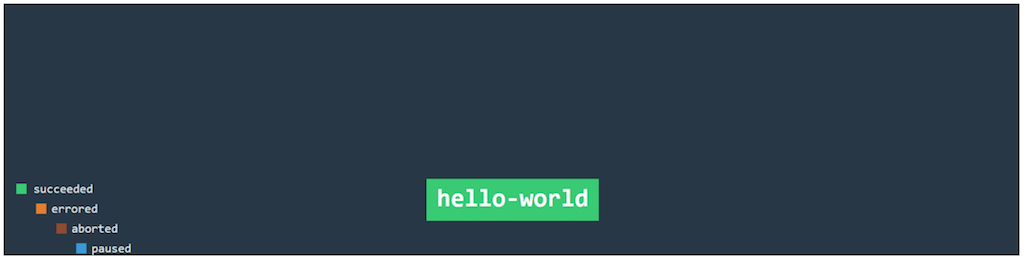

Concourse

Concourse YAML is normally split into multiple files, but for easier reading it has all been inlined below:

```yaml
jobs:
- name: hello-world
  public: true
  plan:
  - task: hello-world
    config:
      platform: linux
      image_resource:
        type: docker-image
        source: {repository: ubuntu}
      run:
        path: sh
        args:
        - -exc
        - |
          echo "hello world"
```

This creates a single job, with no resources and no inputs, that echoes “hello world”. Once the pipeline has been set with `fly set-pipeline`, the job can be triggered through the web interface or with `fly trigger-job`. The task can also be run directly from the command line interface, fly, if its `config:` section is saved in its own file: `fly -t yourconcourse execute --config tests.yml`

This works for any task in the pipeline, including those further downstream, and gives developers full access to run the task against their local binaries and files.

Jenkins

Jenkins has two flavors of Pipeline DSL: declarative and scripted.

For both of them, you need to set up a Pipeline job, either in the Jenkins UI or through its API. The pipeline script can live in version control (as a Jenkinsfile) or be written directly into the job definition on Jenkins. Once that is done, your DSL file is pretty minimal, making hello world almost a one-liner.

```groovy
// Declarative pipeline
pipeline {
    agent any
    stages {
        stage('Build') {
            steps {
                echo 'hello world'
            }
        }
    }
}
```

```groovy
// Scripted pipeline
node {
    stage('Build') {
        echo 'hello world'
    }
}
```

Conclusion

Winner: Both

Getting started is straightforward with both systems. If we could not get them up and running, we would not evaluate them.

Operating systems

Concourse

You create a Concourse worker or master by downloading the Concourse binary and running it with either the `worker` or the `web` subcommand (the web node is the master). The process is the same whether you're running Windows, Linux or OS X, which is possible because Concourse is written in Go.

However, all the native resources, as well as most of the community resources, run in containers, which has some additional implications. A typical setup with Windows will still need at least one Linux worker to run resources. Furthermore, while Windows is getting container support, Concourse only lists Windows containers as a future feature, so a Windows worker runs its processes directly on the host (typically a virtual machine) and separates builds by folder structure rather than by container isolation.

For a pipeline job running on Windows, check out our Git Phlow Windows job; OS X is done similarly (though it is often simply easier to use Linux workers).

A good example can be found here (note the ‘platform: darwin’), taken from David Karlsson’s blog about setting up Concourse for mobile and OS X development.

Jenkins

Jenkins nodes run on either bare-metal servers or VMs: as long as a JVM is available for the OS, it runs. Describing your node environment is left to a third-party tool like Ansible, Chef or Puppet, or to you installing the server manually (if you do, think about automating that part!). You add nodes to your farm either through the master's UI or with the Jenkins Swarm plugin, which makes the nodes contact their master.

Conclusion

Winner: Inconclusive

While there are clear benefits to using containers, they also add a certain amount of complexity. The JVM is simple to set up and runs everywhere, but offers less isolation between builds than containers do.

Pipelines: from embedded to mobile

Concourse

Concourse is incredibly well geared toward web, mobile and desktop development. Embedded development, on the other hand, tends to rely on FPGA tools running on Windows; this is not a hindrance for Concourse, but you get none of the benefits of its container technology.

The Linux tools (like NT FPGA) are quite GUI-heavy, which causes similar issues. Concourse is at its strongest when it can take advantage of containers, and containers pay off most for processes that are cheap to rerun. If a build takes half an hour, the fact that a failed container can cheaply be rerun does not help much.

A lot of embedded work also needs resources to be locked to ensure a controlled build flow, whereas Concourse is designed around purely atomic processes.

Jenkins

As stated above, if it has a JVM, it runs Jenkins.

So, in that regard, it runs every pipeline equally well, whether it is mobile apps (examples here and here), a Haskell compiler, or embedded FPGA development.

When working with a scarce resource, the Lockable Resources plugin offers a really effective way of coordinating access to that resource across nodes.
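Conceptually, a lockable resource has one holder at a time and a queue of waiting builds. By default the oldest waiting build gets the lock next; the plugin's `inversePrecedence` option hands it to the newest waiter instead. The Python model below only illustrates that queueing behaviour; it is not the plugin's implementation, and the class and method names are hypothetical.

```python
from __future__ import annotations

from collections import deque


class LockableResource:
    """Hypothetical model of a shared lock: one holder, the rest wait in a queue."""

    def __init__(self, name: str, inverse_precedence: bool = False) -> None:
        self.name = name
        self.inverse_precedence = inverse_precedence
        self.holder: str | None = None
        self.waiting: deque[str] = deque()

    def request(self, build: str) -> bool:
        """Return True if the build got the lock immediately, False if it must wait."""
        if self.holder is None:
            self.holder = build
            return True
        self.waiting.append(build)
        return False

    def release(self) -> str | None:
        """Hand the lock to the next waiter and return it (None if nobody is waiting)."""
        if not self.waiting:
            self.holder = None
        elif self.inverse_precedence:
            self.holder = self.waiting.pop()  # newest waiting build first
        else:
            self.holder = self.waiting.popleft()  # oldest waiting build first (FIFO)
        return self.holder
```

With three builds requesting the same resource, the default order hands the lock from `build-1` to `build-2` to `build-3`, while `inverse_precedence=True` hands it from `build-1` straight to `build-3`.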

The code for locking a resource is really easy and straightforward:

```groovy
echo 'Starting'
lock('my-resource-name') {
    echo 'Do something here that requires unique access to the resource'
    // any other build will wait until the one locking the resource leaves this block
}
echo 'Finish'
```

You can even reverse the default FIFO order in which waiting builds acquire the lock:

```groovy
// `servers` and `Url` are assumed to be defined earlier in the pipeline
lock(resource: 'staging-server', inversePrecedence: true) {
    node {
        servers.deploy 'staging'
    }
    input message: "Does ${Url}staging/ look good?"
}
```

Conclusion

Winner: Jenkins

Jenkins is the winner here. It runs everywhere, and it makes locking resources, which for many teams is a must, simple.

Developer initiated work

Concourse

Concourse allows developers to execute tasks on the server from their own terminal by running:

```
fly -t myserver execute --config myjob.yml
```

It will then take whatever local input is given and run the task! This is really helpful because it lets developers debug their code without having to go through the pipeline.

The only requirement is a Concourse server that developers can target; it then runs the task in an isolated container on a worker matching the task's specification.

Jenkins

In this area Jenkins has nothing to offer. We need a server-defined job in order to run anything on the build servers. Period.

So you need to make the git round-trip in order to test something:

git push --> Jenkins pull --> Jenkins build --> Jenkins response --> repeat

But with a multibranch pipeline, you can change the pipeline according to your needs and push it to a non-master branch to see it execute.

In an enterprise setup, one could perhaps argue that “you should not use resources on something that does not have a proper pipeline”.

I must admit that I would love to be able to submit a job to the server for execution. It would be an excellent feature to have.

Conclusion

Winner: Concourse

Concourse is showing the way when it comes to developer-initiated pipeline execution. So Jenkins, you need to step up your game here!

Parallelizing your pipeline

Concourse

Concourse supports running steps within a job (with `aggregate`, which newer Concourse versions replace with the `in_parallel` step), resources and entire pipelines in parallel. When a resource changes, it can trigger all the jobs that depend on it, and if the resource changes again a moment later, the job runs again in parallel with the new input.

Again, our Git Phlow pipeline has good examples of this in production code:

```yaml
- name: afterburner
  plan:
  - aggregate:
    - get: praqma-tap
    - get: git-phlow # contains the formula update script
    - get: gp-version
      passed: [takeoff]
    - get: phlow-artifact-darwin-s3
      passed: [takeoff]
      trigger: true
  - task: brew-release
    file: git-phlow/ci/brew/brew.yml
    on_failure:
      put: slack-alert
      params:
        text: |
          brew release failed https://concourse.bosh.praqma.cloud/teams/$BUILD_TEAM_NAME/pipelines/$BUILD_PIPELINE_NAME/jobs/$BUILD_JOB_NAME/builds/$BUILD_NAME
  - put: praqma-tap
    params:
      repository: updated-praqma-tap
```

Jenkins

Jenkins supports this natively in both DSL flavors, using a lightweight executor on the master to coordinate the parallel branches. Because the parallel branches live inside a single stage, all of them need to finish before the next stage can start.

Below are examples of how a parallel execution list can look in both scripted and declarative.

Jenkinsfile (Scripted Pipeline)

```groovy
stage('Test') {
    parallel linux: {
        node('linux') {
            try {
                sh 'run-tests.sh'
            }
            finally {
                junit '**/target/*.xml'
            }
        }
    },
    windows: {
        node('windows') {
            try {
                // Windows agents need the bat step rather than sh
                bat 'run-tests.bat'
            }
            finally {
                junit '**/target/*.xml'
            }
        }
    }
}
```

Jenkinsfile (Declarative Pipeline)

```groovy
pipeline {
    agent none
    stages {
        stage('Test') {
            parallel {
                stage('Windows') {
                    agent {
                        label "windows"
                    }
                    steps {
                        bat "run-tests.bat"
                    }
                    post {
                        always {
                            junit "**/TEST-*.xml"
                        }
                    }
                }
                stage('Linux') {
                    agent {
                        label "linux"
                    }
                    steps {
                        sh "run-tests.sh"
                    }
                    post {
                        always {
                            junit "**/TEST-*.xml"
                        }
                    }
                }
            }
        }
    }
}
```

Conclusion

Winner: Concourse

While not by a big margin, Concourse takes this one.

When running tasks in parallel in Jenkins, they all have to finish before the pipeline can branch out again. Concourse has a much looser definition: each job starts as soon as the specific inputs it depends on are ready.
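The practical difference can be shown with a small timing model, our own simplification with made-up durations: in the stage-barrier model every branch of a stage must finish before the next stage starts, while in the dependency-driven model each task starts as soon as its own inputs are done. The function names and example task names are hypothetical.

```python
from __future__ import annotations


def barrier_finish_times(stages: list[list[str]], durations: dict[str, int]) -> dict[str, int]:
    """Stage-by-stage execution: a stage starts only when every branch of the previous one is done."""
    finish: dict[str, int] = {}
    stage_start = 0
    for stage in stages:
        for task in stage:
            finish[task] = stage_start + durations[task]
        stage_start = max(finish[task] for task in stage)
    return finish


def dataflow_finish_times(deps: dict[str, list[str]], durations: dict[str, int]) -> dict[str, int]:
    """Dependency-driven execution: a task starts as soon as its own inputs are ready.

    Assumes the dependency graph is acyclic.
    """
    finish: dict[str, int] = {}

    def visit(task: str) -> int:
        if task not in finish:
            start = max((visit(dep) for dep in deps.get(task, [])), default=0)
            finish[task] = start + durations[task]
        return finish[task]

    for task in durations:
        visit(task)
    return finish
```

With a 5-minute Linux build, a 12-minute Windows build and 3- and 4-minute test runs, both models finish everything after 16 minutes, but the Linux tests report back after 8 minutes in the dependency-driven model instead of 15 minutes behind the barrier.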

Artifact handling

Concourse

The workflow in Concourse is very strict about the line between resources and jobs. A job can consist of multiple tasks that share artifacts, but a job can only share an artifact with another job if the artifact is pushed out to a resource and stored between the jobs. Concourse itself never stores artifacts; it enforces this pull/push mentality to keep jobs atomic.

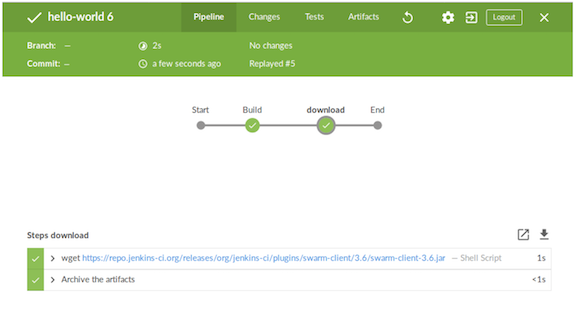
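The pull/push discipline can be sketched in a few lines of Python: tasks inside one job share a scratch workspace, but the only thing that reaches the next job is what was explicitly pushed to a resource. `ArtifactStore` stands in for external storage such as an S3 bucket, and all names here are hypothetical; this illustrates the idea, not how Concourse is implemented.

```python
from __future__ import annotations

import hashlib
from typing import Callable


class ArtifactStore:
    """Hypothetical external storage behind a resource (think of an S3 bucket)."""

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}
        self._history: list[str] = []

    def put(self, data: bytes) -> str:
        # Content-addressed versions, so pushing identical bytes twice is a no-op.
        version = hashlib.sha256(data).hexdigest()[:12]
        if version not in self._blobs:
            self._blobs[version] = data
            self._history.append(version)
        return version

    def get(self, version: str | None = None) -> bytes:
        # Without an explicit version, fetch the latest one.
        return self._blobs[version if version is not None else self._history[-1]]


Task = Callable[[dict], None]


def run_job(tasks: list[Task], inputs: dict) -> dict:
    """Tasks in one job share a scratch workspace.

    It is returned only so the caller can model the job's final put step;
    nothing in it reaches another job unless it is put to the store.
    """
    workspace = dict(inputs)
    for task in tasks:
        task(workspace)
    return workspace


def compile_task(ws: dict) -> None:
    ws["binary"] = b"compiled:" + ws["source"]


def package_task(ws: dict) -> None:
    ws["tarball"] = b"tar(" + ws["binary"] + b")"


def smoke_test_task(ws: dict) -> None:
    ws["passed"] = ws["tarball"].startswith(b"tar(compiled:")
```

A test job fed from the store sees the tarball it fetched, but not the intermediate `binary` that only existed in the build job's workspace.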

Jenkins

Jenkins can store artifacts on the master with the `archiveArtifacts` step, but we generally recommend a dedicated artifact management system like Artifactory for that need.

For transferring files from one node to another within the same pipeline, e.g. when you need to move your web application to your stress-test environment, you can use the `stash`/`unstash` steps, which make the stashed files available on whichever node needs them.

Conclusion

Winner: Jenkins

While both can work with third-party artifact storage, Jenkins also has built-in support for storing artifacts on the master.

The real question, though, is: do you really want your CI/CD system to be your artifact management system as well?

Retriggering pipelines

Concourse

This is as simple as clicking the ‘+’ button on a job in the web client, or triggering the job again from the fly command line interface. Concourse then runs the same process as before, with the same inputs (or new ones, if they have been updated).

Jenkins

You can retrigger a whole pipeline at any time, with the same parameters.

Triggering a single stage inside a pipeline is (to my great frustration) a CloudBees Jenkins Enterprise-only feature. CloudBees is working on a similar feature for declarative pipelines, but not for the more advanced scripted ones. To me, this is a very disappointing move.

Conclusion

Winner: Concourse

Because Concourse treats each job in a pipeline as an atomic action, retriggering is a no-brainer. Jenkins needs to (re)implement this feature to be on par with Concourse.

Final Conclusion

And the winner is…..

Well, to be frank, there is no single winner, because it all depends on what you value most in your setup. Jenkins is the de facto standard among our customers, but Concourse has merits that make it a worthy competitor.

Published: Jan 16, 2018

Updated: Mar 26, 2024